Ethical concerns and potential risks of AI use in the medical field

AI-powered tools, such as Carepatron’s AI scribe, enhance clinical efficiency by transcribing conversations, summarizing patient notes, and generating documentation. These tools reduce administrative workload, allowing healthcare providers to focus more on patient care. However, integrating AI in healthcare raises several ethical concerns and potential risks, particularly regarding data privacy, accuracy, bias, and patient autonomy.

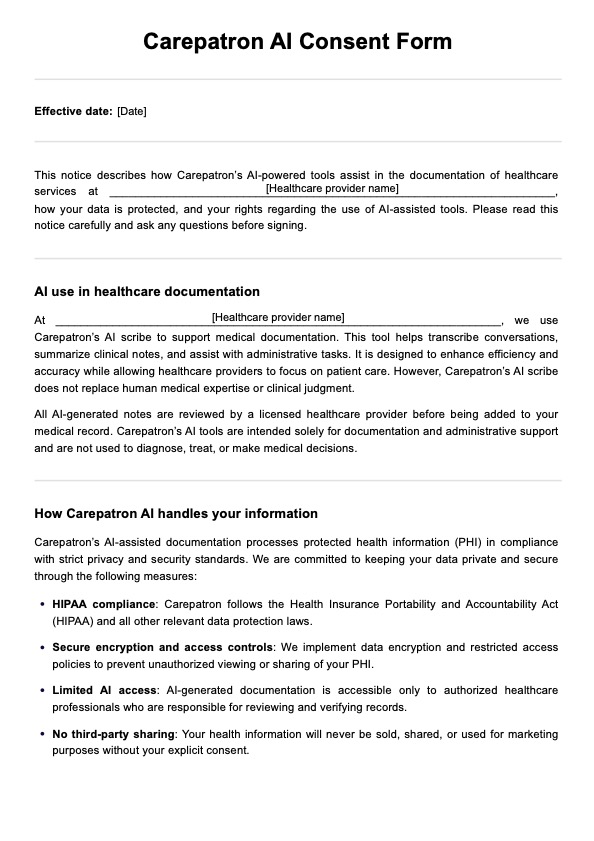

Patient data privacy & HIPAA compliance

AI models process vast amounts of sensitive health data, which makes data security and confidentiality a priority. Under the Health Insurance Portability and Accountability Act (HIPAA), healthcare providers must ensure that protected health information (PHI) is stored, transmitted, and processed with strict safeguards. Key considerations include:

- Data encryption: AI tools must use encryption protocols to protect PHI during storage and transmission.

- Access control: Only authorized personnel should be able to access AI-generated records.

- Third-party compliance: Any AI tools integrated into healthcare workflows must comply with HIPAA regulations to prevent unauthorized access or misuse of patient data.

Failing to maintain HIPAA compliance can lead to legal consequences, data breaches, and loss of patient trust.
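
To make the access-control point concrete, here is a minimal Python sketch of a deny-by-default role check with an audit trail. It is an illustration only, not a HIPAA-compliant implementation and not how any particular product works: the role names, `AccessLog`, `can_access`, and `read_record` are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical roles permitted to read AI-generated records; a real
# deployment would load this from its own access policy.
AUTHORIZED_ROLES = {"physician", "nurse"}


@dataclass
class AccessLog:
    """In-memory list of access attempts, kept for later audit."""
    entries: list = field(default_factory=list)

    def record(self, user_id: str, record_id: str, allowed: bool) -> None:
        self.entries.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "user_id": user_id,
            "record_id": record_id,
            "allowed": allowed,
        })


def can_access(role: str) -> bool:
    """Return True only for roles on the allow-list (deny by default)."""
    return role in AUTHORIZED_ROLES


def read_record(user_id: str, role: str, record_id: str,
                store: dict, log: AccessLog) -> str:
    """Return the record text if the role is authorized; log every attempt."""
    allowed = can_access(role)
    log.record(user_id, record_id, allowed)
    if not allowed:
        raise PermissionError(f"role {role!r} may not read record {record_id}")
    return store[record_id]
```

Logging denied attempts as well as successful ones is a deliberate choice in this sketch, since unauthorized access attempts are often what an audit needs to surface.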

Accuracy and reliability

While AI assists with documentation and decision-making, it cannot replace human intelligence. AI-generated outputs are based on patterns in training data rather than clinical judgment, which means they may contain errors, misinterpretations, or missing context. To ensure reliability:

- A licensed healthcare professional should always review AI-generated notes and summaries before adding them to a patient’s record.

- Automated decision support should be used as a guide, not a replacement for clinical expertise.

- AI tools must undergo continuous training and improvement to reduce inaccuracies.

Overreliance on AI without proper verification can lead to medical errors, misdiagnoses, or inappropriate treatments, underscoring the need for human oversight.
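
One way a system might enforce the review step above is to refuse to file any AI-generated note that has not been signed off. The Python sketch below assumes a hypothetical `DraftNote` structure and hypothetical `approve` and `add_to_record` helpers; it is a simplified illustration, not a description of any real scribe tool.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class DraftNote:
    """An AI-generated note awaiting clinician review (hypothetical structure)."""
    patient_id: str
    text: str
    reviewed_by: Optional[str] = None  # ID of the licensed reviewer, once approved


def approve(note: DraftNote, reviewer_id: str,
            corrected_text: Optional[str] = None) -> DraftNote:
    """Return a reviewed copy, optionally carrying the reviewer's corrections."""
    return DraftNote(
        patient_id=note.patient_id,
        text=corrected_text if corrected_text is not None else note.text,
        reviewed_by=reviewer_id,
    )


def add_to_record(record: list, note: DraftNote) -> None:
    """Append a note to the patient record, refusing unreviewed drafts."""
    if note.reviewed_by is None:
        raise ValueError("AI-generated note must be reviewed before filing")
    record.append(note)
```

With this shape, the only path into the record runs through `approve`, so skipping review raises an error instead of silently filing an unchecked note.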

Bias in AI models

AI models are trained on large datasets, but these datasets can inadvertently reflect biases, which may impact clinical decisions. Bias in AI models can arise from:

- Imbalanced training data: If AI models are trained on data that underrepresents specific demographics, they may produce less accurate recommendations for those groups.

- Historical biases: AI tools may inherit biases present in traditional medical practices or historical patient records, perpetuating healthcare disparities.

- Algorithmic misinterpretation: AI may emphasize specific data points while ignoring critical contextual factors that human providers would naturally consider.
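
A simple first check for the imbalanced-data problem described above is to compare a model's accuracy across demographic groups. The Python sketch below uses hypothetical helper names (`accuracy_by_group`, `accuracy_gap`) and made-up toy labels. Accuracy is only one of many possible fairness measures, so treat this as a starting point and not a complete bias audit.

```python
from collections import defaultdict


def accuracy_by_group(groups, y_true, y_pred):
    """Return {group: fraction of correct predictions} for each group label."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for g, t, p in zip(groups, y_true, y_pred):
        total[g] += 1
        correct[g] += int(t == p)
    return {g: correct[g] / total[g] for g in total}


def accuracy_gap(per_group):
    """Difference in accuracy between the best- and worst-served groups."""
    return max(per_group.values()) - min(per_group.values())


# Toy data, invented for illustration: group "B" is underrepresented.
groups = ["A", "A", "A", "A", "B", "B"]
y_true = [1, 0, 1, 0, 1, 0]
y_pred = [1, 0, 1, 0, 0, 0]

per_group = accuracy_by_group(groups, y_true, y_pred)
print(per_group)                # {'A': 1.0, 'B': 0.5}
print(accuracy_gap(per_group))  # 0.5
```

A large gap does not prove the model is biased, but it flags a group whose results deserve closer human review.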

Patient autonomy

Patients have the right to make informed choices about their healthcare, including whether to consent to AI-assisted documentation. Transparency in AI use is essential to maintaining patient trust. Healthcare providers should:

- Clearly explain how AI tools will be used in patient care.

- Provide patients with an opt-out option, allowing them to request human-only documentation.

- Address concerns about data security, accuracy, and AI-generated recommendations.

- Ensure that patients understand that AI does not replace human medical decision-making.

Healthcare providers can foster trust, transparency, and ethical AI adoption by prioritizing informed consent and clear communication.